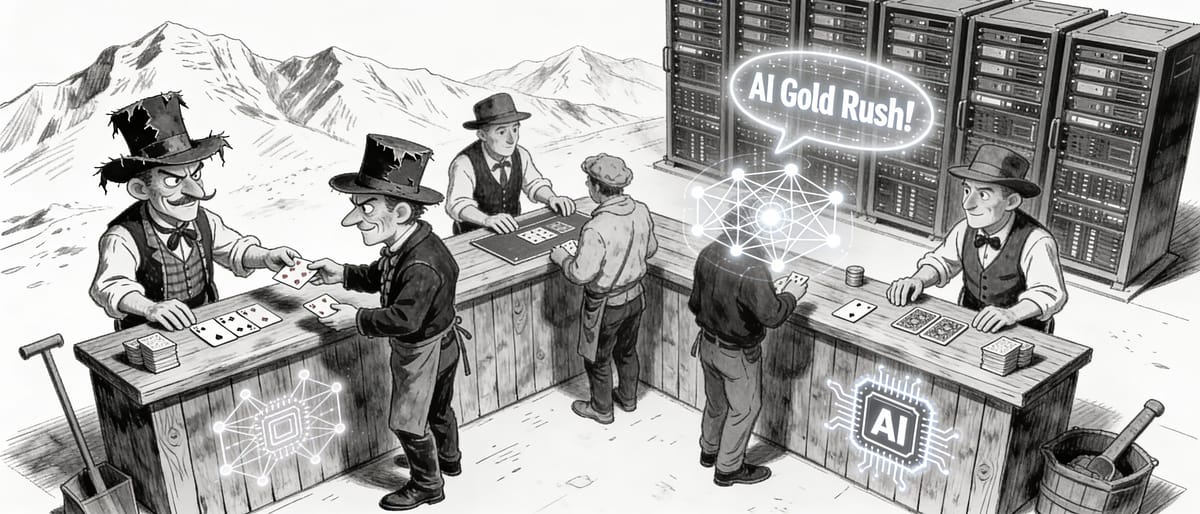

The AI Data Center Gold Rush

The $500 billion AI infrastructure boom is built on an assumption that may not survive contact with reality: that application revenue will eventually justify the investment.

THE ASSUMPTION: Infinite Compute, Guaranteed Returns

The belief is seductive: explosive, near-infinite demand for generative AI compute will justify a historic wave of CAPEX for hyperscale data centers and specialized GPU infrastructure. The logic? Owning the "picks and shovels" of this new gold rush is a guaranteed, low-risk path to generational wealth, because application-layer revenue will inevitably grow into the capacity being built.

This assumption drives the biggest infrastructure boom in tech history.

THE REALITY CHECK: The Math Isn't Adding Up

A historic investment boom is undeniably underway. Data center CAPEX is projected to exceed $500 billion by 2028, heavily weighted toward AI. Meta alone committed $35–40 billion for 2024. Microsoft, Google, and Amazon are making multi-year commitments of similar scale, on the order of a hundred billion dollars or more. Nvidia's valuation soared past $3 trillion.

But this is where the underlying economics start to show cracks:

The Revenue Gap

Generative AI applications like ChatGPT, Copilot, and Midjourney generate only a fraction of the revenue needed to justify the infrastructure spend. OpenAI, the category leader, is estimated to have annualized revenue in the low billions of dollars, while a single hyperscale data center can cost more than $1 billion to build and equip, and the industry's combined CAPEX runs to hundreds of billions. The math doesn't scale.

The Utility Plateau

The initial explosion in consumer AI usage is leveling off: growth rates are slowing significantly, according to traffic data from Similarweb and others. Enterprise adoption is real but measured, held back by integration costs, accuracy issues ("hallucinations"), and unclear ROI.
