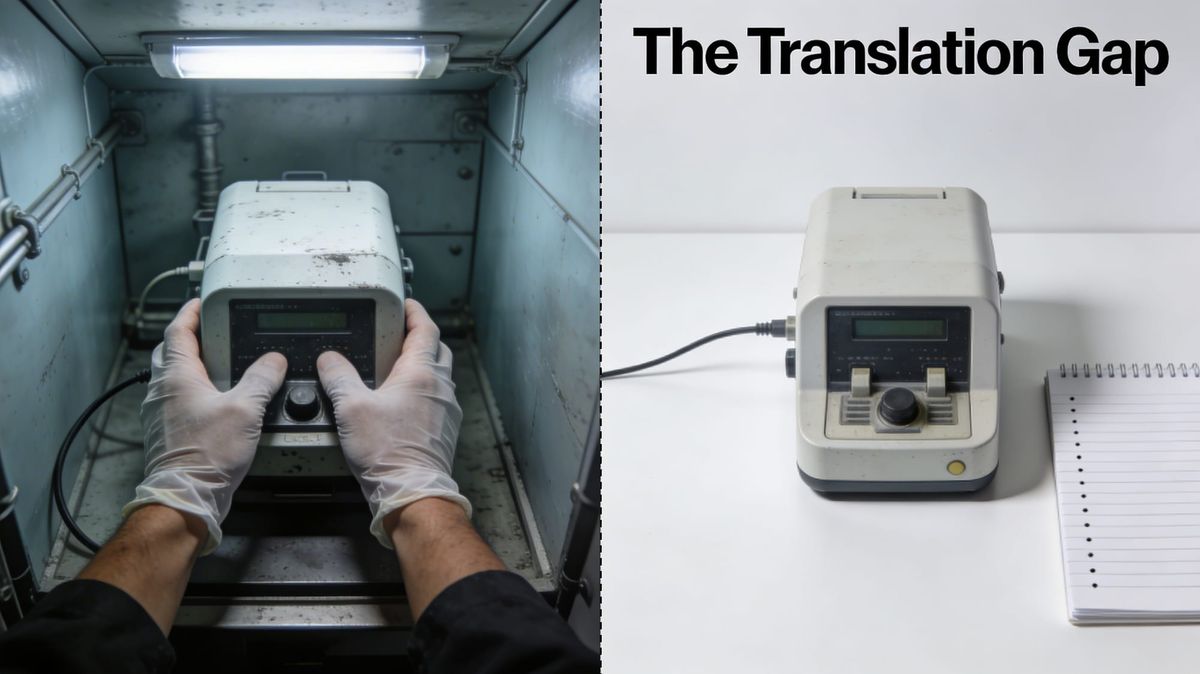

Lost In Translation

The Prototype Feedback Problem

The Assumption

The prototype works. The technical validation is done. Structured feedback sessions have taken place, notes are filed, the team has reviewed them. You are, by any reasonable measure, doing everything right.

The assumption driving the next phase: usability feedback is an input to the engineering queue. Document it, prioritise it, iterate on it. The process is working. The loop is closed.

"We have the feedback. We're on top of it."

This is where the failure begins — not with negligence, not with a broken process, but with a mistranslation that the documentation itself makes invisible.

The Reality Check

The founders in this failure mode are not dismissive operators. They are structured, technically serious, and genuinely committed to building something that works. That is precisely what makes the failure so hard to see — and so expensive.

But before examining the cognitive filter behind this failure, it is worth being precise about what usability feedback actually looks like at the prototype stage. It is rarely a clean signal. In deep tech and hardware, much of the feedback arriving after an MVP demonstration is not about the product concept at all. It concerns process and operating environment. Users flag that the workflow does not match their documented procedures, or that the device conflicts with existing ISO standards or internal SOPs. They report physical constraints: restricted movement in the operating environment, cumbersome materials, a form factor that works in the lab but not in the space where it will actually be used. They note that the prototype is unfinished: functional, but without the ergonomic refinements that would make it usable in a sustained way.

This category of feedback is legitimate and important. It is also the category most likely to be absorbed into the engineering queue as fixable line items, because it looks like a technical problem and, in part, is one.

The difficulty is that beneath this layer of environmental and procedural feedback, there is often a second signal: that the interaction model itself does not fit the user's reality. When these two signals are processed together through an engineering frame, the second one disappears. The team addresses the procedural friction and considers the session closed. The deeper question — whether the product concept fits how the user actually works — goes unasked.

Here is what that looks like in practice:

The user says: "The interface conflicts with our standard operating procedure for this task."

The engineer hears: Integration issue. Logs it as a configuration item. Assigns it to the compliance backlog — or escalates it as a change management task, assuming the SOP can be revised to accommodate the product.

The user means: "We would never run this device in the way you have designed it to be run."

The engineer reads: User education gap, or a change management problem. Response: updated workflow documentation, or a conversation with the user’s operations team about revising the SOP. What this reading misses is that many SOPs are not administrative conventions that can be updated on request. They exist because the process step they govern creates the value — or protects against a risk — that justifies the process in the first place. An SOP prescribing a specific sequence in a sterile environment, or a documented handover protocol in a safety-critical workflow, cannot be revised to fit the product without dismantling the logic of the process itself. The engineer reads a change management problem. The user is describing a fundamental incompatibility.

The feedback entered the system. It was accurately recorded. And then it was neutralised — not by dismissal, but by translation. Real signal was processed through the wrong frame, and the output was a technical fix queue filling up around an interaction model that remained untouched.

This is not a comprehension failure in any simple sense. The engineer understood the feedback. The engineer just categorised it as a problem with a different shape than the user intended.

The process worked. The translation didn't.

The Filter: How Good Data Becomes a Non-Decision

Every professional role carries a cognitive frame: a set of trained instincts about what kind of problem a given signal represents, and therefore what kind of response it calls for. This is not a flaw in the individual; it is how professional expertise works. A regulatory affairs specialist reading the same usability report will see a compliance exposure. A commercial lead will see a positioning problem. An engineer will see a systems problem with a technical solution. None of these readings is wrong in isolation. The difficulty arises when only one frame is applied to a signal that carries information relevant to all of them, and when that frame is the one most likely to convert a product concept question into a technical backlog item. When the engineering frame meets the layered feedback typical of the prototype stage, three things reliably happen:

First-order effect

Usability issues are logged. The documentation is real. The team is aware. The procedural and environmental constraints are captured. The sprint backlog grows appropriately.

Second-order effect

Each logged item is re-categorised through an engineering lens: configuration gap, documentation deficiency, hardware constraint for next revision. The category determines the solution type. Solutions are technical. The interaction model does not move.

Third-order effect

The accumulation of documented, addressed, technically resolved items creates a false administrative closure. The backlog shrinks. Velocity looks healthy. And the core question, whether the product concept fits how the user actually works, ships unanswered into the next phase.

The better the team's process discipline, the more complete this neutralisation becomes. A chaotic team might leave the signal visible in unresolved tickets. A structured team resolves everything — and buries the signal beneath the evidence of its own responsiveness.

The Three Assumptions

Three founder assumptions drive the cascade, each appearing reasonable in isolation, each compounding the one before.

Assumption 1 — "Usability feedback is an input to engineering, not a signal about the product concept."

This is the routing decision that sets everything else in motion. When usability feedback arrives after a technically successful prototype demonstration, the natural frame is: the product works, so the feedback describes what needs to be fixed, not what needs to be reconsidered.

The problem is that prototype-stage feedback contains multiple signal types that require different responses. Some of it genuinely is engineering input — the form factor needs refinement, the interface needs visual clarity, the device needs better thermal management. This category belongs in the technical backlog, and addressing it is the right response.

But a second category of feedback carries a different message: that the operating logic of the product does not match the logic of the user's actual working environment. This feedback frequently arrives disguised as the first type. A user who says the device conflicts with their SOP may be reporting an integration problem. Or they may be reporting that the interaction model was designed around assumptions about how the task is performed, and that those assumptions do not hold in practice. The engineer hears the first reading. The second one does not reach the product decision layer.

What gets filed as "workflow integration issue" is sometimes a signal that the interaction model itself is wrong. The fix queue fills with legitimate, resolved technical items. The core structural question remains unasked.

The compounding dynamic: As resolved tickets accumulate and sprint velocity stays high, the team builds documented evidence of its own responsiveness. The underlying structural question becomes progressively harder to surface — not because anyone is hiding it, but because the system has categorised it out of existence.

Assumption 2 — "If they understood how to use it, they would see that it works."

Engineer-founders frequently interpret usability confusion as a knowledge gap in the user rather than a design gap in the product. The instinct is to explain, not to reconsider. This shows up in onboarding documentation, in how demos are framed, in investor updates that describe adoption curves rather than product problems.

The implicit assumption is that the engineer's model of the product is the correct one, and that users who struggle with it are working from an incomplete model. The solution is user education, not product change.

The compounding dynamic: The longer this assumption holds, the more the product is optimised for users who think like engineers. The gap between the designed user and the actual user widens with each iteration — while the documentation trail makes it look like progress.

Assumption 3 — "We have the feedback. We're on top of it."

The documentation of feedback creates a false sense of closure. The issue is named. The session happened. The notes are filed. Administrative completeness is mistaken for actual responsiveness. This is the most dangerous assumption because it is structurally reinforced by exactly the discipline that good founding teams apply.

The compounding dynamic: The better the feedback process, the more convincing the evidence that the team is listening — and the longer the reckoning is delayed. The runway shortens. The documentation grows.

Forensic Case: Humane AI Pin

Primary case · Deep tech hardware · Founded 2018, launched April 2024, acquired by HP February 2025 for $116M (sought $750M–$1B)

Humane was founded by Imran Chaudhri and Bethany Bongiorno — both former Apple, both with direct experience building the iPhone's interaction model. Of the 200 people Humane hired, 40% were Apple alumni. The engineering pedigree was exceptional. The device raised $230 million from investors including Sam Altman, Marc Benioff, SoftBank, Microsoft, and Qualcomm. And when it shipped in April 2024, it worked exactly as designed.

The AI Pin was a screenless wearable: worn on the chest, held in place by magnets through the user's clothing, operated by voice and gesture, with a small laser projector that displayed output onto the user's palm. The interaction model required the user to raise their hand to a specific angle, hold it still while waiting for AI processing (response times ranged from slow to very slow), and interpret projected output in variable lighting conditions. The device had no app ecosystem, no integration with the user's existing phone, and required its own cellular subscription at $24 per month on top of the $699 hardware cost.

The usability problems were not discovered at launch. They were predictable from the interaction model and had been raised publicly by analysts before the device shipped. Bloomberg's Mark Gurman concluded before launch that the voice control and laser projection system made the product a "nonstarter for most people." The Verge noted the fundamental design constraints in early hands-on coverage. The signal was available.

The signal was also available internally. According to a New York Times investigation drawing on interviews with 23 current and former Humane employees, advisers, and investors, employees raised concerns about the device's viability and functionality during the development phase, and those concerns were dismissed. Humane also never hired a head of marketing. The company targeted 100,000 unit sales in its first year. It shipped 10,000.

"Employees had raised concerns about the viability and functionality of the device during the development phase, but these concerns were dismissed." — New York Times, based on 23 current and former employees, advisers, and investors

This is the detail that transforms the Humane case from a story about an arrogant company into something more useful for this autopsy. The concern was not absent. It was present, raised by staff who later described it to journalists, and it was neutralised before it could become a decision. The structured team processed the concern, and continued.

The public artifact that makes the filter visible arrived on 11 April 2024, when the first wave of reviews landed. The consensus across major outlets was damning: Engadget called the device "the solution to none of technology's problems"; The Verge wrote "not even close"; Marques Brownlee, whose review drew 8.5 million views, titled it "The Worst Product I've Ever Reviewed." The reviews did not describe a product with minor calibration issues. They described a product whose fundamental interaction model did not work for real users in real conditions.

Ken Kocienda, the engineer who built the iPhone's autocorrect keyboard and one of the most respected figures in mobile interaction design, posted a lengthy response on X that day. It is one of the rarest artifacts in the public record: a documented, real-time demonstration of the filter in action, from someone with no intention of obscuring anything.

"I feel that today's social media landscape encourages hot takes… and the spicier the better! Indeed, it's so easy to find people online who are willing to jump on the skepticism bandwagon to gape at the same things you're pointing at and poke holes in every little detail." — Ken Kocienda, Head of Product Engineering, Humane, 11 April 2024

Read the structure of this response carefully, because it follows the cascade precisely. Kocienda opened by acknowledging that the Pin "can be frustrating sometimes" — the signal was received. He then noted that so can laptops and smartphones — the signal was normalised. He then drew the comparison to the early iPhone's touchscreen keyboard, which faced scepticism before users learned it — the signal was translated into an adoption curve problem. He closed by suggesting that users "will need to find out how the product fits" for them, and that he trusted his intuition.

The near-universal feedback from independent professional reviewers — people who had used the device in real conditions — was processed through an engineering frame and converted into a maturity and adoption problem. The product concept was not questioned. The interaction model was not reconsidered. The users, it was implied, would catch up.

The Inversion Test

What if the reviewers were not premature — but the product was?

If we invert Kocienda's read: what if the consistent friction reported across independent reviewers was not a sign that they had not yet learned the device, but accurate signal that the interaction model was not ready for the conditions in which it would be used? What evidence would the team have needed to set aside to maintain the belief that adoption was the problem?

The reviewers were not speculating. They had used the device. Multiple experienced technology reviewers, independently, reported the same specific failures: gestures not recognised, responses too slow for real interaction, laser projection unreadable in ambient light, basic tasks impossible to complete. This was not a calibration question or a learning curve question. It was evidence about whether the interaction model worked in uncontrolled conditions — the only conditions that matter for a consumer product.

The signal was categorised as social media noise. That categorisation protected the engineering frame and delayed the product reckoning until there was no runway left to act on it. Humane sold fewer than 10,000 units before seeking a buyer. Between May and August 2024, more AI Pins were returned than purchased. In February 2025, HP acquired the company's software and IP for $116 million, against the $750 million to $1 billion the company had sought. The AI Pin device itself was discontinued. The technology had worked exactly as designed.

Counterfactual Scenarios

Scenario A: If the internal employee concerns had been processed through the same structured feedback system as external user feedback

The NYT investigation surfaced that employee concerns about viability were raised and dismissed during development. This represents an earlier version of the same filter: internal signal routed through the engineering frame and categorised as solvable before it could become a decision. If a pre-defined threshold had existed — at what point does internal concern constitute a signal to stop rather than iterate? — the routing decision would have been contested. It was not.

Scenario B: If the team had defined in advance what volume or consistency of usability feedback would constitute a signal to reconsider the interaction model

Without a pre-defined threshold, every piece of feedback can be absorbed into the existing frame. The Humane team's implicit threshold was "when the adoption curve proves it" — which is a threshold that arrives after the runway is spent. A threshold set before launch — five independent reviewers reporting the same fundamental failure mode triggers an interaction model review — would have forced the question rather than deferring it.

Scenario C: If success were measured by task completion rate in uncontrolled real-world conditions rather than by technical performance in controlled demonstration

The AI Pin's internal performance metrics were engineering metrics: response latency, projection accuracy, gesture recognition rate under controlled conditions. User-facing metrics in realistic operating environments — how often a user successfully completed a task without frustration, in variable lighting, in motion, without preparation — were not the primary frame. Humane reportedly used ice packs to pre-cool the device before investor demos to manage the overheating problem. The metric that mattered was the demo. The metric that predicted failure was the unsupported real-world session. Changing the measurement changes what counts as a passing grade.

Open Questions

In Destruction Desk tradition: no answers. Only the questions worth sitting with before the next iteration.

On the signal beneath the signal

— Of the usability feedback we received in our last session, how much of it described environmental and procedural constraints — and how much was actually telling us something about the interaction model itself? Do we know the difference?

— If we removed all the feedback that can be resolved through configuration, documentation, or hardware refinement, what is left? Have we read that residual carefully?

On the filter

— What would a user with no knowledge of how our product works say about the last three usability issues we logged? Would their framing match ours?

— Have we ever changed the interaction model — not the interface, the model — based on user feedback? If not: what would that feedback need to look like for us to do so?

— Who in our founding team is positioned to hear a concern and route it to product decision rather than technical backlog? Is that person in the room when feedback is reviewed?

On internal signal

— Have any team members raised concerns about the product concept — not about implementation, but about whether the concept fits how the user actually works? How were those concerns categorised?

— If an employee were to raise a viability concern today, what would happen to it? What is the path from concern to decision?

On timing

— At what point in our development cycle will the cost of changing the interaction model become prohibitive? Have we already passed it?

— What would we need to see in the next feedback session to decide that the interaction model needs to be reconsidered — not refined? Have we written that threshold down?

On measurement

— If we measured success by unsupported task completion in the actual operating environment — not in a demo — would our prototype still pass?

— How would we know if the feedback we are collecting is a refinement signal or a product concept signal? What threshold would need to be crossed before we would reclassify it?

Destruction Desk

We perform autopsies on innovation’s failed assumptions.

This newsletter was edited by Manfred Lueth.
