The MVP Trap

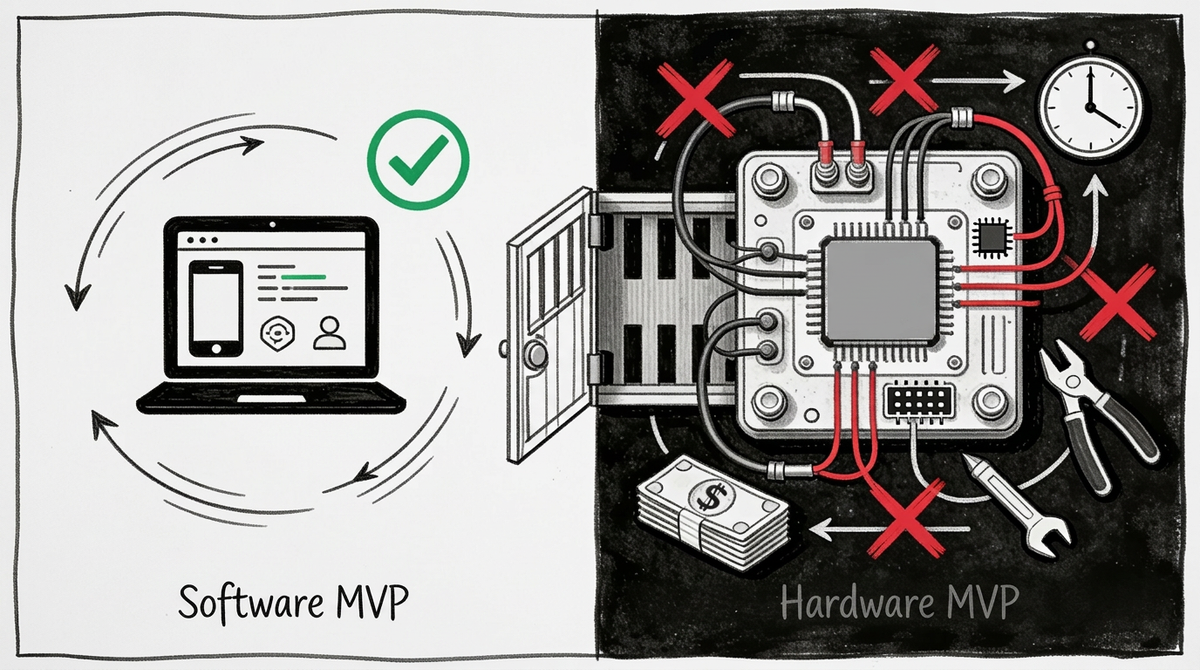

Why "build the minimum thing and learn fast" is the sentence that sends deep tech founders into the wrong valley entirely.

The Assumption

Somewhere between 2011 and 2015, the startup world reached a consensus. Eric Ries had published The Lean Startup. Y Combinator was producing a generation of founders trained to ship fast, measure ruthlessly, and treat failure as data. The doctrine had a name, a logic, and a track record: Minimum Viable Product. Build the smallest thing that tests your core assumption. Get it in front of users. Learn. Iterate. Repeat.

It worked. Spectacularly, visibly, repeatedly — in software.

And then, with the inevitability of a good idea applied too broadly, it migrated. Into hardware. Into deep tech. Into the laboratories and supply chains and regulatory environments where the word "minimum" collides with physics, and physics wins.

Today, the MVP model is so embedded in startup culture that questioning it feels almost reactionary — like arguing against iteration, against learning, against humility. Accelerators teach it. Investors expect it. Hackathons train it. And deep tech founders, many of whom come from engineering or science backgrounds where rigour and caution are virtues, find themselves in rooms where the received wisdom is: stop perfecting, start shipping.

This autopsy is not an argument against learning fast. It is an argument that "minimum" means something categorically different when your prototype weighs forty kilos, requires six weeks of supplier lead time, and fails in ways that cost more to diagnose than most seed rounds can absorb.

The assumption: MVP logic — build the minimum thing, ship it, learn from failure, iterate — transfers from software to deep tech hardware development.

The hidden sub-assumption doing most of the damage: "minimum" is a design choice. You can always do less.

In software, that is true. In hardware, physics disagrees.

The Time Horizon Mismatch

The MVP model was built for a specific set of conditions. It is worth naming them precisely, because the conditions are the argument.

In software:

- A meaningful iteration cycle runs from days to weeks

- The cost of a failed experiment is primarily engineer time

- Failures are reversible — a bad release can be patched, rolled back, or abandoned

- "Shipping" and "learning" happen in close temporal proximity

- The thing you ship at iteration 1 and the thing you ship at iteration 10 are made of the same material: code

In deep tech hardware:

- A meaningful iteration cycle runs from months to years

- The cost of a failed experiment includes materials, tooling, supplier qualification, and often facility access

- Failures at scale can be catastrophic and irreversible — a manufacturing defect discovered at volume costs orders of magnitude more than one found in the lab

- "Shipping" and "learning" are separated by supply chain timelines that no sprint velocity can compress

- The thing you ship at iteration 1 and the thing you ship at iteration 3 are often, physically, different objects — requiring requalification, re-testing, and re-approval at each stage

The temporal gap this creates is not merely inconvenient. It is structurally incompatible with the feedback loop the MVP model requires to function.

A software founder operating on a two-week sprint cycle gets 26 learning cycles per year. A hardware founder operating on a six-month iteration cycle gets two — and the second one depends on whether the first one generated enough insight, and enough remaining capital, to justify building the next version at all.
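The arithmetic behind that comparison can be sketched in a few lines of Python. This is an illustration only: the function name `learning_cycles_per_year`, the 52-week year, and the reading of "six months" as 26 weeks are assumptions made for the example.

```python
def learning_cycles_per_year(cycle_weeks: float, weeks_per_year: int = 52) -> int:
    """Count the complete build-ship-learn cycles that fit into one year."""
    if cycle_weeks <= 0:
        raise ValueError("cycle_weeks must be positive")
    # Only completed cycles produce a learning, so round down.
    return int(weeks_per_year // cycle_weeks)


software = learning_cycles_per_year(2)    # two-week sprint
hardware = learning_cycles_per_year(26)   # six-month iteration, assumed to be 26 weeks
print(software, hardware)
```

Under these assumptions the software team gets 26 chances to be wrong in a year and the hardware team gets two.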

The MVP model's core promise — fail fast, learn cheap — rests on an implicit assumption that failing is cheap. In deep tech, the cheapest failure is still expensive. And the most common failure is not discovering that your idea was wrong. It is discovering that what you thought was "minimum" wasn't minimum at all — it was just the first layer of constraints you hadn't yet encountered.

The Reality Check

The problem is not that deep tech founders apply MVP logic naively. Many of them know, intellectually, that hardware is different. The problem is that the entire institutional environment surrounding them — accelerators, early-stage investors, pitch competitions, hackathons — is calibrated to reward MVP-style demonstrations, and to interpret the absence of a shipped prototype as a lack of founder momentum.

This creates a specific trap. The founder knows their "minimum" viable hardware prototype took eight months and €400,000 to build. The investor in the room has a mental model formed by software, where eight months means you could have shipped twelve times by now. The mismatch is not discussed. The founder learns to present their timeline in terms the investor finds legible. The investor learns to expect hardware milestones on software schedules. And the gap between expectation and reality gets papered over — until it doesn't.

What actually happens in the field is consistent enough to constitute a pattern:

The hardware startup raises a seed round on the strength of a working prototype. The prototype is real — it works, under the conditions in which it was tested. The investor, applying software intuitions, models 18 months to commercial launch. The founder, aware this is optimistic but dependent on the capital, accepts the terms. Twelve months in, the prototype works but the manufacturing process doesn't. The tolerances that were achievable in the lab are not achievable at the supplier. The component that costs €12 in prototype quantities costs €3 at volume — but the supplier won't quote volume pricing until you can commit to 10,000 units, and you can't commit to 10,000 units until you have customers, and you can't have customers until you can quote a price. The feedback loop the MVP model depends on has a minimum cycle time of 14 months. The runway has 6 months left.
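The closing numbers of that scenario can be checked with a rough sketch. The class `HardwarePlan` and its field names are hypothetical, introduced only for illustration. The 14-month cycle and 6-month runway are the figures from the paragraph above.

```python
from dataclasses import dataclass


@dataclass
class HardwarePlan:
    runway_months: float   # months of capital remaining
    cycle_months: float    # minimum time for one full feedback loop

    def cycles_affordable(self) -> int:
        """Complete feedback loops the remaining runway can pay for."""
        return int(self.runway_months // self.cycle_months)

    def shortfall_months(self) -> float:
        """Months of runway missing before one full loop can complete."""
        return max(0.0, float(self.cycle_months - self.runway_months))


plan = HardwarePlan(runway_months=6, cycle_months=14)
print(plan.cycles_affordable())
print(plan.shortfall_months())
```

With these inputs the plan affords zero complete cycles and falls eight months short of a single one.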

This is not a founder execution failure. It is an assumption failure — one that was imported from a different game and applied to conditions where its core premises do not hold.

Who Profits When Founders Believe This?

The MVP model's migration into deep tech did not happen by accident. It was carried by institutional actors who benefit — not through malice, but through incentive structures that systematically reward the wrong signals in the wrong context.

Accelerators and programme managers whose output metric is "companies that shipped something" have a structural preference for the MVP model, because it produces visible, photographable, stage-ready demonstrations on a schedule that fits a cohort. The 14-month slog through supplier qualification and tolerance testing produces nothing that fits a graduation event. Accelerators are not incentivised to prepare deep tech founders for the real cost structure of hardware iteration — they are incentivised to produce compelling evidence of momentum. The two are not the same thing.

Early-stage investors whose fund reporting benefits from upward valuation marks have a rational preference for founders who ship fast, because shipping creates the narrative of progress that justifies the next mark-up. Whether the thing that shipped has been tested under operational conditions — whether the "minimum" viable prototype has encountered the failure modes it will encounter in deployment — is a question for the next fund. This is not cynicism. It is the logical consequence of a mismatch between the fund timeline and the technology development timeline.

The hackathon industrial complex — and this is worth dwelling on — actively trains a generation of founders to treat 48-hour shipping as the gold standard of execution quality. The model is coherent and, in its own domain, genuinely effective. But it transmits a set of reflexes that are optimised for software and actively counterproductive in hardware. More on this below.

Founder cost: While founders focus on shipping the minimum thing fastest, they systematically underinvest in three capabilities that determine whether they survive the hardware development phase: manufacturing readiness assessment, operational environment testing, and capital structure planning for a 24-to-48 month gap with no revenue. By the time the gap becomes visible, the runway is already short.

The Hackathon as Training Ground (for MVP?)

Last weekend I was at STARTPLATZ Cologne for their AI Coding Hackathon: more than 100 people, and an atmosphere that felt, as one participant put it, like family without the drama. By Friday night, teams who had never met were building until 2am. By Sunday afternoon, 27 projects were pitched from the stage, including one from an 11-year-old who genuinely rocked the room and one from a 14-year-old, Thimofej Zapko, who won the whole thing for the second time running. He told me that he had previously built an n8n workflow that turned incoming emails into voice messages and let you reply the same way. Elegant, useful, immediately deployable. Within 48 hours of the final pitch, he had his first customer inquiry. His first sales call followed shortly after.

This is what the hackathon format does at its best — it compresses the distance between idea and evidence of demand into a single weekend. Every team, almost without exception, found a way to arrive at Sunday with something demonstrable: a working prototype, a live demo, a proof of concept narrow enough to be real. That shared instinct — ship something, however constrained — is the hackathon's core curriculum. The question worth asking is: for whom does it work, and under what conditions?

Because the 48-hour feedback loop that made Thimofej's project real is built on an assumption so obvious that nobody states it: that your MVP can actually be built in 48 hours. In software, that's a constraint on creativity. In deep tech hardware, it's a constraint on physics. And physics doesn't negotiate with sprint velocity.

The hackathon is not broken. It is brilliantly calibrated for what it was designed to do: train software-native founders to ship, validate, and iterate at speed. The problem is the assumption — widespread, unexamined, institutionally reinforced — that the instincts built at a hackathon transfer to hardware development. They don't. Not because deep tech founders are less capable, but because the feedback mechanism the hackathon trains — build, ship, observe, adjust — requires a cycle time that hardware's physical constraints structurally prevent.

A deep tech founder who internalises hackathon reflexes and applies them to hardware does not fail because they lack ambition or speed. They fail because they optimise for the wrong variable. Speed of iteration is the bottleneck in software. Capital efficiency per learning cycle is the bottleneck in hardware. These require different instincts, different planning horizons, and a fundamentally different definition of "minimum."

The event in Cologne was genuinely energising — and it surfaced a question that nobody in the room asked out loud: what would this weekend look like if your MVP weighed forty kilos and required six weeks of lead time?

The Forensic Analysis: What Actually Broke

Canoo: the MVP that proved the concept and missed the product

Canoo went public via SPAC in December 2020, raising $600 million on the strength of a distinctive prototype — a boxy lifestyle van that attracted genuine interest from Apple, Walmart, NASA, and the US Army. The order book eventually exceeded $3 billion. In January 2025, Canoo filed for Chapter 7 bankruptcy with less than $50,000 in assets. In 2024, the company delivered 22 vehicles.

The MVP logic worked perfectly: Canoo built the minimum thing that demonstrated the concept, generated demand signals, and unlocked capital. What the minimum thing did not — could not — demonstrate was manufacturing economics at volume, the capital structure required to bridge prototype and production, or the operational reliability needed to honour fleet contracts. The gap between the prototype and the product was not an execution failure. It was a structural consequence of treating a concept demonstration as proof of commercial viability.

The battery sector pattern

Between 2022 and 2025, fourteen grid-scale battery companies with Series A funding and largely validated technology failed before reaching commercial scale. The common pattern: technology that worked at prototype scale encountered interconnection queue delays averaging 38 months against modelled timelines of 12. The "minimum" viable demonstration had been optimised for investor legibility, not operational environment testing. The failure modes that killed these companies were not discoverable in the lab conditions under which the MVPs were built.

The cell therapy manufacturing wall

In 2024, seven cell therapy companies shut down despite FDA breakthrough designation. Not one failed on efficacy. All seven failed because their contract manufacturers could not scale production beyond 50 patient doses per quarter. The MVP — the clinical proof of concept — had validated the science. It had not validated the manufacturing system. In software terms: the prototype worked, but the infrastructure it depended on at scale was never stress-tested. In hardware terms: there is no patch for a CDMO that cannot hit volume.

The pattern across all three cases is identical. The MVP proved what it was designed to prove — that the core concept worked under controlled conditions. It did not prove, and was not designed to prove, what the company would eventually need: that the system works under the conditions in which it will actually operate.

Parallel Domain Evidence: They Stopped Arguing About This Twenty Years Ago

The startup world is not the first community to discover that "build fast and iterate" has limits when physical constraints enter the equation. Other domains encountered this wall earlier, hit it harder, and developed responses that the innovation ecosystem has largely chosen not to import.

Pharmaceutical development does not have an MVP model. It has Phase I, Phase II, and Phase III trials — a staged validation process in which each phase is explicitly designed to surface the failure modes the previous phase could not. The cost of this process is enormous. The alternative — shipping a minimum viable drug and iterating based on patient outcomes — is not considered. The reason is not regulatory conservatism. It is that the feedback loop from a failed drug iteration is measured in years, not weeks, and the cost of a late-stage failure is catastrophically higher than the cost of a rigorous early-stage gate.

Military procurement via OTA (Other Transaction Authority) contracts requires operational demonstration before purchase commitment. Not a prototype in a controlled environment — a system demonstrated under conditions approximating actual operational stress. The threshold is higher and slower than a demo day. It also produces a qualitatively different kind of proof: not "this works" but "this works under the conditions in which it will need to work."

Aerospace hardware development operates on a build-test-fix cycle that explicitly treats each iteration as a risk-reduction exercise, not a speed exercise. The goal is not to ship the minimum thing fastest. The goal is to surface the highest-consequence failure modes at the lowest-cost stage of development. The cadence is determined by the cost structure of failure, not by the investor's appetite for visible progress.

None of these domains found a faster or cheaper answer than the MVP model. They found that the MVP model's core premise, that iteration is cheap, does not hold when physical constraints, regulatory gates, and catastrophic failure modes are present. The startup world knows this. It has chosen, for institutional and incentive reasons, to keep teaching the MVP model anyway.

Second-Order Consequence Cascade

The MVP model's migration into deep tech does not just create immediate problems. It creates delayed failures that are harder to attribute — and therefore harder to learn from.

First-order effect: the MVP approach produces a demonstrable prototype that unlocks a funding round on a compressed timeline.

Second-order effect: the funding round is structured around software-style milestones — 12 to 18 months to commercial launch — that are incompatible with hardware development cycles. The founder accepts these terms because the alternative is no capital at all.

Third-order effect: when the milestones are not reached, the founder is characterised as having "execution problems" or having "lost focus." The structural mismatch between the funding instrument and the development reality is not recorded as the cause of failure. The next deep tech founder receives the same advice from the same investors — because the assumption that generated the failure was never surfaced.

The MVP approach worked, in the narrow sense that it secured the funding round. That is precisely why the cycle repeats.

Open Questions

This section does not offer answers. It offers questions that the standard narrative makes easy to avoid.

On measurement:

- If you mapped your current development stage against your funding runway, at what point does your capital run out before your next hardware iteration is complete?

- What failure modes does your current prototype not yet know about — and what would it cost to surface them before your investors do?

- How many learning cycles does your capital buy you, given your actual hardware iteration timeline? Is that number sufficient to reach the insight you need?

- What would you need to demonstrate — beyond a working prototype — to know whether your technology is actually ready for the next development stage?

On incentives:

- Who in your current ecosystem benefits from you believing that the fastest path through hardware development is the best one?

- If your lead investor's fund structure runs to seven years and your development timeline runs to ten, who is bearing the risk of that mismatch, and has it been named?

- What becomes harder to fundraise if the real cost structure of hardware iteration is made explicit in your pitch?

- Which intermediaries in your ecosystem would disappear if deep tech founders stopped optimising for demo-day legibility?

On timing:

- The MVP doctrine was largely formed between 2011 and 2015, in a software context, at near-zero interest rates. What has changed in your sector since then that would require different advice?

- If your manufacturing process is not yet defined, what exactly are you scaling?

- Was "fail fast" ever actually correct for hardware, or just frequently repeated by people whose mental models were formed in software?

On the AI simulation question:

- If AI-assisted simulation can compress the cost of surfacing physical failure modes — running thousands of virtual stress tests before a single physical prototype is built — does that change what "minimum" means in deep tech hardware?

- Or does it create a new layer of assumption about what the simulation captured, and what it missed?

- Which failure modes are structurally invisible to simulation, and how would a founder know which category their critical unknowns fall into?

- Is the bottleneck in deep tech hardware iteration the cost of physical experiments, or the quality of the questions those experiments are designed to answer? And does AI help with both, or only one?

On comparison:

- Which companies in your sector successfully crossed from prototype to product — and what did they have at the prototype stage that made the crossing possible?

- Which companies had better prototypes, more capital, and stronger order books than you — and still did not make it?

- If you designed your development process around the assumption that "minimum" is a physics problem, not a design choice, what would you do differently starting tomorrow?

A Final Note on the Limits of This Autopsy

This analysis does not argue that the MVP model is wrong, that hackathons are harmful, or that speed of iteration is irrelevant. It argues that the MVP model is suited to a specific set of conditions (fast cycle times, reversible failures, low iteration costs) and that applying it to deep tech hardware requires translating not just the vocabulary but the underlying logic.

"Minimum" in software is a question about scope. "Minimum" in hardware is a question about physics. The founders who navigated the hardware development valley successfully were not slower or more cautious than the ones who didn't. They were more precise about what their minimum viable prototype had actually proven — and more honest about what it hadn't. That precision is not pessimism. It is just accuracy about where you are, and what it will cost to get to where you need to be.

The MVP model gave founders permission to stop over-engineering and start learning. Deep tech needs a successor concept: one that preserves the learning orientation while replacing the iteration-speed assumption with one calibrated to the actual cost structure of physical development.

It helps if the people on the other side of the table are aware of this too. In the end, it is always about expectation management, isn't it?

Tags: #STARTPLATZ #AIHackathon #Deeptech

Destruction Desk

We perform autopsies on innovation’s failed assumptions.

This newsletter was edited by Manfred Lueth.
